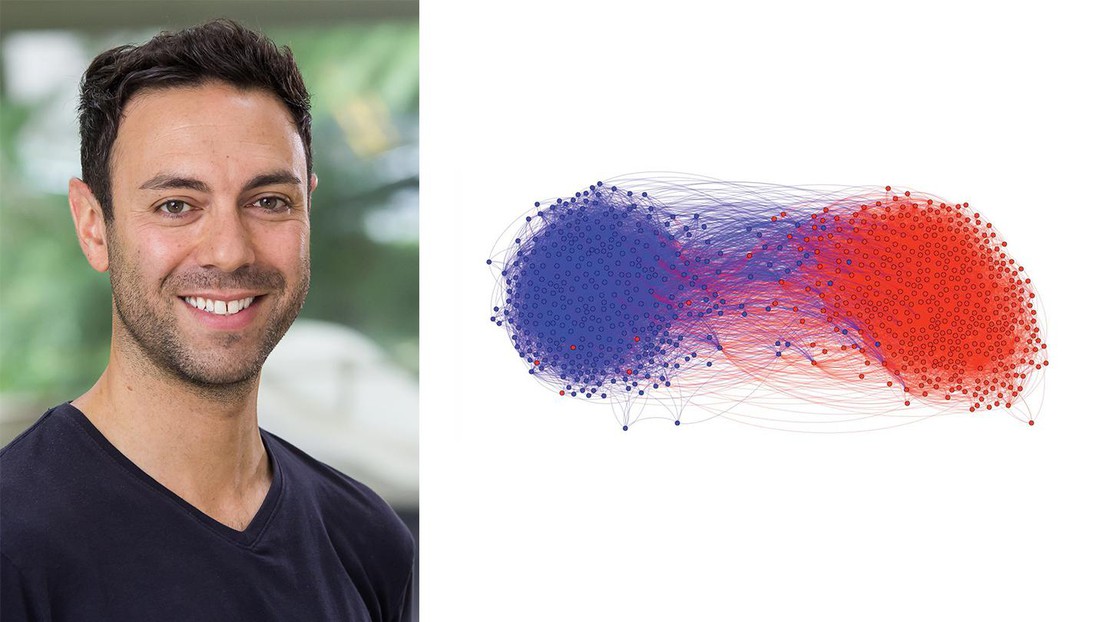

Emmanuel Abbé. Credit: EPFL-DR

Emmanuel Abbé is the new Chair of Mathematical Data Science at EPFL. His work answers fundamental questions in machine learning and information theory, and in particular on community detection.

Sociologists study the patterns of everyday social interactions and relationships by structuring society in a network. In this network, every item is represented by a dot - or "node" - and each interaction by lines between dots. Networks of interacting items are what we know as graphs.

One of the main points of interest in such networks is to figure out which nodes are "alike"; for example, co-workers or family members. When similar nodes group together they begin to form what are called "communities", but detecting a "community" is a complex and key problem. It is crucial, for example, when studying protein-protein interactions or gene expression in cells, or for performing medical diagnosis and finding patterns in social behavior.

The most basic task of community detection consists in partitioning the nodes of a graph into clusters that are more densely connected, e.g. members of a sport club. Having grown tremendously since the 1980's, the field of community detection today employs a remarkable diversity of models and algorithms developed in different scientific communities such as machine-learning and computer science.

Community questions

"Despite this growth, there are still several fundamental questions," says Professor Emmanuel Abbé, EPFL's Chair of Mathematical Data Science, (Schools of Basic Sciences and Computer and Communication Sciences). His work focuses on addressing persistent problems in community detection.

The first problem has to do with the validity of detected communities in graphs. "Algorithms may output communities, but are these meaningful or artefacts?" asks Abbé. Another question involves the reliability of the field's current methods: "Can we always extract the communities when they are present? Can we extract them fully or partially?" And finally, there's quality control: "What are good benchmarks for measuring the performance of algorithms - and which are the best algorithms?"

In recent years, Abbé's group has been able to answer these questions for a class of random graphs used widely in machine learning and statistics. These random graphs are called "stochastic block models" and are useful benchmarks for scientists as they are very insightful and admit a vast collection of practical extensions. Abbé's work in stochastic block model analysis puts forward a research direction bridging information theory and machine learning that has seen various follow-up works in both communities.

Abbé and his co-authors have characterized when the extraction of communities is possible or not for general stochastic block models. Their research improves on decades-long work, obtaining the tight bounds (a.k.a. "fundamental limits") for various community-detection requirements, and developing various algorithms for achieving these bounds.

"Communities are one of the first features of interest in networks, and block models are one of the first network models," says Abbé. "There are many more problems concerned with extracting patterns in graphical data; hopefully the recent developments will give us guidance on these too."

Tracking the math

In a paper published in 2016 (1), Abbé's group first derived the fundamental limit for exact recovery in the two-community case, giving a closed form expression for the limit. The paper further showed that the limit is efficiently achievable, and connected the exact recovery problem to coding theory, thus establishing a new perspective on community detection and graph clustering.

This work was extended in another paper (2) - that was actually published in 2015 - by solving exact recovery in the general SBM, with an arbitrary number of clusters of arbitrary sizes and arbitrary connectivity rates between communities. In particular, it quantified the fundamental limit in terms of the Chernoff-Hellinger divergence, giving a new operational meaning to this mathematical divergence in terms of community detection.

Weaker recovery requirements where the communities are only partially recovered were investigated in another paper (3) in 2017 for the general SBM. The paper proves the optimality of an algorithm that consists in a linearization of the belief-propagation (BP) algorithm from statistical physics, settling a conjecture made by physicists. While it is an open problem to establish rigorous performance guarantees for BP in loopy graphs, the paper obtained a rigorous proof of the optimality of the linearized BP algorithm by connecting it to a spectral algorithm on certain nonbacktracking operators.

These works and the relevant literature are overviewed in References 4, and 5 below.