VANCOUVER, Wash. - As self-driving cars begin operating in cities, a question remains about how to make them work in rural areas with limited telecommunications infrastructure.

New research from Washington State University suggests a potential answer, demonstrating that a small, affordable computer running a compressed large language model may be an effective decision-maker for autonomous vehicles. The work, carried out on an open-source simulator, also suggests a possible approach for efficiently powering other kinds of applications, such as agricultural robotics.

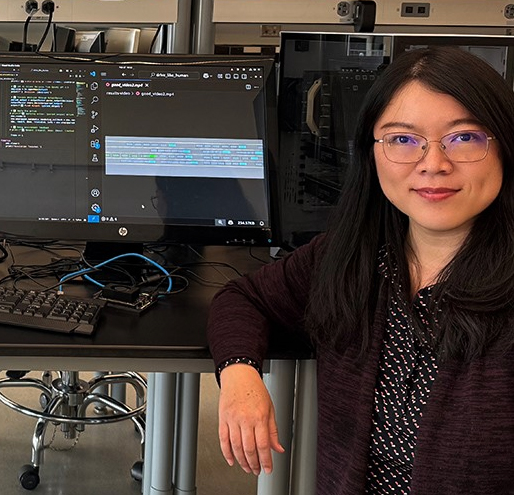

"With autonomous driving, we need to make decisions right away," said Xinghui Zhao, an associate professor of computer science, director of the School of Engineering and Computer Science at WSU Vancouver, and corresponding author of the new publication.

"If you have a super powerful cloud on the back end, you can easily train and improve the perception models to support decision-making in cars, but that's in urban areas where you have a really good connection. If we talk about rural areas, there's not much connection, or maybe the connection is on and off. In that case, you really need the capability to process data on the fly."

The work is part of ongoing research by Zhao and her colleagues into the challenges facing self-driving cars in rural areas. It is supported by the Pacific Northwest Transportation Consortium, a group of researchers and transportation officials funded by the U.S. Department of Transportation. The paper appears in the Proceedings of the Tenth ACM/IEEE Symposium on Edge Computing.

Self-driving cars remain at an early stage of development; they have begun appearing in a few major cities, and a variety of systems are in the works. There is great interest in developing the cars as "edge" devices, which can gather, process and analyze data in a self-contained system rather than relying on distant data centers for processing. Such decentralized computing can improve efficiency, lower costs and power usage, and protect privacy.

"There are more and more devices that we can collect data from - a variety of sensors, a small microphone, even a small camera," Zhao said. "And all these devices are collecting data. If we rely on a back-end data center, that means every device needs to send data to the center for processing. More and more, there is a preference for applications that process data on the device where it is collected."

Autonomous vehicles use three primary layers of computing: perception, or collecting and interpreting sensor data from cameras, radar and other sources; reasoning, or real-time analysis of sensor data to choose driving actions; and action, or executing those decisions.
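
The three layers can be pictured as a simple pipeline. The following is a minimal sketch, not the researchers' system: every name, sensor field and threshold is hypothetical, and a fixed headway rule stands in for the reasoning layer where the study used a language model.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Interpreted sensor data (hypothetical fields)."""
    lead_gap_m: float  # distance to the vehicle ahead, in meters
    speed_mps: float   # own speed, in meters per second


def perceive(raw: dict) -> Observation:
    """Perception: turn raw sensor readings into a structured observation."""
    return Observation(
        lead_gap_m=float(raw["radar_gap_m"]),
        speed_mps=float(raw["wheel_speed_mps"]),
    )


def reason(obs: Observation, min_headway_s: float = 2.0) -> str:
    """Reasoning: choose a driving action.

    Assumption: a fixed time-headway rule is used here only as a stand-in
    for whatever model makes the decision.
    """
    headway_s = obs.lead_gap_m / obs.speed_mps if obs.speed_mps > 0 else float("inf")
    return "brake" if headway_s < min_headway_s else "keep_speed"


def act(decision: str) -> dict:
    """Action: translate the decision into (hypothetical) actuator commands."""
    commands = {
        "brake": {"throttle": 0.0, "brake": 1.0},
        "keep_speed": {"throttle": 0.3, "brake": 0.0},
    }
    return commands[decision]


def drive_step(raw: dict) -> dict:
    """Run one perception -> reasoning -> action cycle."""
    return act(reason(perceive(raw)))
```

Calling `drive_step({"radar_gap_m": 10.0, "wheel_speed_mps": 20.0})` yields a braking command, because a 10-meter gap at 20 meters per second is half a second of headway. Keeping the layers separate is what lets one of them, here the reasoning step, be swapped out and tested on its own.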

In the new project, the researchers focused on reasoning. Some autonomous-driving systems rely on a form of AI known as deep reinforcement learning (DRL), which must be trained with huge amounts of data and which improves over time through trial and error. DRL is costly and can be unreliable when encountering unforeseen scenarios.

Large language models (LLMs), on the other hand, excel at higher-level reasoning and can use context to make decisions when encountering new circumstances. But they also have large computational demands and rely on cloud computing.

"An LLM model is pretty huge," said Ishparsh Uprety, a graduate research assistant and first author of the paper. "If you are going to run that on a car, there's going to be a lot of computational work. We thought: How about we optimize the model and make it smaller?"

The WSU team set out to test the performance of a self-contained LLM, one whose data and memory footprints were compressed, which speeds up decision-making but may cost some precision. They used an open-source LLM, Mistral, compressed to run on a Jetson Orin Nano, an 8-gigabyte computing module that is smaller than a paperback novel.
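
The trade-off between footprint and precision can be illustrated with a little arithmetic. The sketch below rests on two assumptions that the article does not confirm: that the model has roughly 7 billion parameters, and that the compression works by quantization, meaning each weight is stored in fewer bits. The function names and sample weights are made up for illustration.

```python
ASSUMED_PARAMS = 7e9  # assumption: a model of about 7 billion parameters


def weight_footprint_gb(n_params: float, bits_per_weight: int) -> float:
    """Memory needed for the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def quantize(values, bits):
    """Symmetric uniform quantization of floats to signed integers."""
    levels = 2 ** (bits - 1) - 1                      # e.g. 7 for 4 bits
    scale = (max(abs(v) for v in values) / levels) or 1.0
    return [round(v / scale) for v in values], scale


def dequantize(q_values, scale):
    """Map quantized integers back to approximate floats."""
    return [q * scale for q in q_values]


def max_roundtrip_error(values, bits):
    """Largest absolute error introduced by quantizing and restoring."""
    q_values, scale = quantize(values, bits)
    restored = dequantize(q_values, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


sample_weights = [0.12, -0.5, 0.33, 0.07]  # made-up values
fp16_gb = weight_footprint_gb(ASSUMED_PARAMS, 16)
int4_gb = weight_footprint_gb(ASSUMED_PARAMS, 4)
err_8bit = max_roundtrip_error(sample_weights, 8)
err_4bit = max_roundtrip_error(sample_weights, 4)
```

Under the 7-billion-parameter assumption, 16-bit weights alone come to about 14 GB, more than the module's 8 gigabytes, while 4-bit weights come to about 3.5 GB. The price is visible in the round-trip error, which is larger at 4 bits than at 8 bits for the sample values.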

Using an open-source platform for testing AI systems, they compared the reasoning of the compressed LLM with that of a full-size ChatGPT model in seven driving scenarios.

The two systems made safe, comparable decisions in most cases, though in one scenario the Mistral version crashed. Given the far smaller computing footprint, the researchers concluded, the initial results suggest that compressed LLMs could eventually be viable for edge computing in self-driving cars, though it will take much more testing before such a system is road-ready.