DURHAM, N.C. -- Even if you can't name the tunes, you've probably heard them: from the iconic "dun-dun-dun-dunnnn" opening of Beethoven's Fifth Symphony to the melody of "Ode to Joy," the German composer's symphonies are some of the best known and most widely performed in classical music.

Just as enthusiasts can recognize stylistic differences between one orchestra's version of Beethoven's hits and another's, now machines can, too.

A Duke University team has developed a machine learning algorithm that "listens" to multiple performances of the same piece and can tell the difference between, say, the Berlin Philharmonic and the London Symphony Orchestra, based on subtle differences in how they interpret a score.

In a study published in a recent issue of the journal Annals of Applied Statistics, the team set the algorithm loose on all nine Beethoven symphonies as performed by 10 different orchestras over nearly eight decades, from a 1939 recording of the NBC Symphony Orchestra conducted by Arturo Toscanini, to Simon Rattle's version with the Berlin Philharmonic in 2016.

Although each follows the same fixed score -- the published reference left by Beethoven about how to play the notes -- every orchestra has a slightly different way of turning a score into sounds.

The bars, dots and squiggles on the page are mere clues, said Anna Yanchenko, a Ph.D. student and musician working with statistical science professor Peter Hoff at Duke. They tell the musicians what instruments should be playing and what notes they play, and whether to play slow or fast, soft or loud. But just how fast is fast? And how loud is loud?

It's up to the conductor -- and the individual musicians -- to bring the music to life: to determine exactly how much to speed up or slow down, how long to hold the notes, and how much the volume should rise or fall over the course of a performance. For instance, if the score for a given piece says to play faster, one orchestra may double the tempo while another barely picks up the pace at all, Yanchenko said.

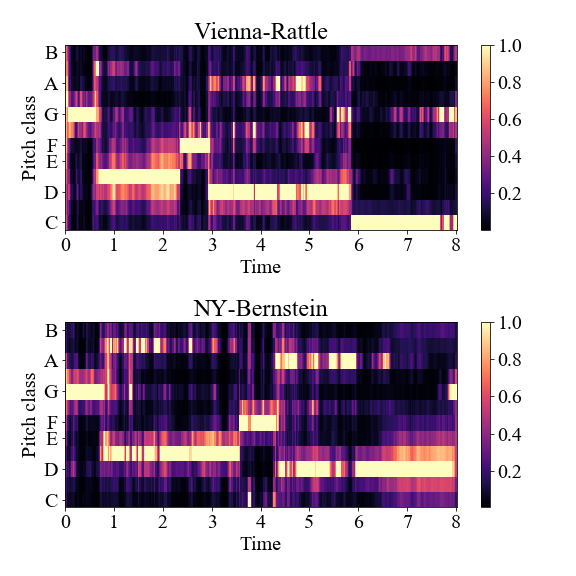

Hoff and Yanchenko converted each audio file into plots, called spectrograms and chromagrams, essentially showing how the notes an orchestra plays and their loudness vary over time. After aligning the plots, they calculated the timbre, tempo and volume changes for each movement, using new statistical methods they developed to look for consistent differences and similarities among orchestras in their playing.
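For readers curious what such plots contain, here is a minimal Python sketch of the general idea, using only NumPy. It is not the team's code (their source is linked at the end of this article), and the function names, frame length and hop size are illustrative assumptions. A spectrogram records how much energy sits at each frequency in each short slice of time; a chromagram folds those frequencies into the 12 pitch classes of the Western scale.

```python
import numpy as np

def spectrogram(signal, frame_len=2048, hop=512):
    """Magnitude spectrogram: rows are frequency bins, columns are time frames.

    frame_len and hop are illustrative defaults, not values from the study.
    """
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    # Slice the audio into overlapping, windowed frames.
    frames = np.stack([
        signal[i * hop : i * hop + frame_len] * window
        for i in range(n_frames)
    ])
    # Fourier transform of each frame gives its frequency content.
    return np.abs(np.fft.rfft(frames, axis=1)).T

def chromagram(spec, sample_rate, frame_len=2048):
    """Fold spectrogram energy into 12 pitch classes (0 = C, ..., 9 = A, 11 = B)."""
    freqs = np.fft.rfftfreq(frame_len, d=1.0 / sample_rate)
    chroma = np.zeros((12, spec.shape[1]))
    for k, f in enumerate(freqs):
        if f < 27.5:  # skip the DC bin and bins below the lowest piano note
            continue
        # Convert frequency to the nearest MIDI note number (A4 = 440 Hz = 69).
        midi = 69 + 12 * np.log2(f / 440.0)
        chroma[int(round(midi)) % 12] += spec[k] ** 2
    return chroma

# Usage on a synthetic one-second 440 Hz tone (concert A), standing in for audio.
sample_rate = 22050
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 440 * t)

spec = spectrogram(tone)
chroma = chromagram(spec, sample_rate)
loudness = np.sqrt((spec ** 2).mean(axis=0))  # a rough per-frame volume curve
print(chroma.argmax(axis=0))  # strongest pitch class in each time frame
```

Real recordings would first have to be loaded from audio files and aligned to one another, steps this sketch leaves out.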

Some of the results were expected. The 2012 Beethoven cycle with the Vienna Philharmonic, for example, has a strikingly similar sound to the Berlin Philharmonic's 2016 version -- since the two orchestras were led by the same conductor.

But other findings were more surprising, such as the similarities between the symphonies conducted by Toscanini with regular modern instruments, and those played on period instruments more akin to Beethoven's time.

The study also found that older recordings were more quirky and distinctive than newer ones, which tended to conform to more similar styles.

Yanchenko isn't using her code and mathematical models as a substitute for experiencing the music. On the contrary: she's a longtime concert-goer at the Boston Symphony Orchestra in her home state of Massachusetts. But she says her work helps her compare performance styles on a much larger scale than would be possible by ear alone.

Most previous AI efforts to look at how performance styles change across time and place have been limited to considering just a few pieces or instruments at a time. But the Duke team's method makes it possible to contrast many pieces involving scores of musicians and dozens of different instruments.

Rather than have people manually go through the audio and annotate it, the AI learns to spot patterns on its own and understands the special qualities of each orchestra automatically.

When she's not pursuing her Ph.D., Yanchenko plays trombone in Duke's Wind Symphony. Last semester, they celebrated Beethoven's 250th birthday pandemic-style with virtual performances of his symphonies in which all the musicians played their parts by video from home.

"I listened to a lot of Beethoven during this project," Yanchenko said. Her favorite has to be his Symphony No. 7.

"I really like the second movement," Yanchenko said. "Some people take it very slow, and some people take it more quickly. It's interesting to see how different conductors can see the same piece of music."

The team's source code and data are available online at https://github.com/aky4wn/HMDS.

CITATION: "Hierarchical Multidimensional Scaling for the Comparison of Musical Performance Styles," Anna K. Yanchenko, Peter D. Hoff. Annals of Applied Statistics, December 2020. DOI: 10.1214/20-AOAS1391