Ethical considerations are also deeply tied to time, context, and culture. ©iStock

As part of the Week of Action Against Racism, EPFL is exploring the issue of algorithmic discrimination. Ahead of a public conference on campus dedicated to the topic, we've taken a closer look at the "black box."

It is a systemic issue. Discriminatory biases creep into every level of artificial intelligence, from the data used to train models, to the algorithms themselves, the outputs they generate, and even the manual corrections applied afterward. These biases also affect every sector that increasingly relies on AI for decision-making: healthcare, recruitment, social welfare allocation, credit approval, and legal services. Most concerning, however, is that generative AI models not only reproduce human biases but also tend to amplify them. Ahead of an upcoming roundtable discussion at EPFL, we spoke with Anna Sotnikova, a postdoctoral researcher at EPFL's Natural Language Processing Laboratory, and Estelle Pannatier, senior policy officer at AlgorithmWatch CH.

"Training data is inherently biased, and addressing these biases at the source is extremely challenging," says Anna Sotnikova. "We've observed that attempts to remove certain biases can affect model performance, as filtering information may also reduce useful knowledge." However, the real issue is not so much the existence of bias as how it is defined, detected, and used. "People are often unaware that important decisions affecting them are made by algorithmic systems and AI, which can lead to discrimination," says Estelle Pannatier.

Biases are not always explicit. Rather than producing overtly discriminatory statements, models may generate more subtle patterns, for example, disproportionately associating leadership roles with certain demographic groups. "This subtlety can make biases harder to detect," notes Sotnikova. "If a system produces clearly problematic outputs, they are easier to question. More nuanced patterns, however, may go unnoticed and still influence perceptions."
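One way to make such subtle patterns visible is to count them. The sketch below is purely illustrative and not a method described by the researchers: it assumes a set of model outputs has already been annotated by hand with a role and a perceived demographic group (the `samples` list and the group labels "A" and "B" are hypothetical), and it computes how each role is distributed across groups.

```python
from collections import Counter

def group_share_by_role(samples):
    """For each role, return the share of samples attributed to each group."""
    counts = {}
    for role, group in samples:
        counts.setdefault(role, Counter())[group] += 1
    return {
        role: {group: n / sum(counter.values()) for group, n in counter.items()}
        for role, counter in counts.items()
    }

# Hypothetical annotations of generated outputs: (role, perceived group).
samples = [
    ("leader", "A"), ("leader", "A"), ("leader", "A"), ("leader", "B"),
    ("assistant", "A"), ("assistant", "B"), ("assistant", "B"), ("assistant", "B"),
]

shares = group_share_by_role(samples)
print(shares["leader"])     # share of each group among "leader" outputs
print(shares["assistant"])  # share of each group among "assistant" outputs
```

A strongly uneven split for one role does not by itself prove discrimination, but it flags a pattern that no single output would reveal.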

What is fair and what is right?

Bias is rooted in values, and "aligning with values requires defining them," says Sotnikova, whose doctoral research focused on the ethical challenges of AI. "As a result, many current approaches prioritize filtering clearly harmful or illegal content, which is easier to identify than broader notions such as fairness or justice." It is far easier to determine what is legal or illegal than what is fair or right.

Ethical considerations are also deeply tied to time, context, and culture. For instance, norms and expectations around professional roles have evolved significantly over time and differ across regions. Should AI systems reflect existing social realities, or aim to show more diverse representations? "There isn't a single answer," Sotnikova explains. "In some cases, it may be important to reflect diversity, especially when not constrained by factual accuracy." "It's important not to focus solely on the tool and its outputs," adds Estelle Pannatier. "This goes beyond these technologies; geopolitical forces are at play. It involves a concentration of power among a few large corporations that shape public opinion."

Imperfect corrections

Various efforts are underway to mitigate bias, such as improving representation across gender or other attributes. However, these interventions often involve trade-offs. "When you look closely, new challenges tend to emerge," says Sotnikova. While bias in the representation of a "CEO" or a "doctor" has been addressed in many languages, this is not universally the case, and the corrections are applied inconsistently across contexts.

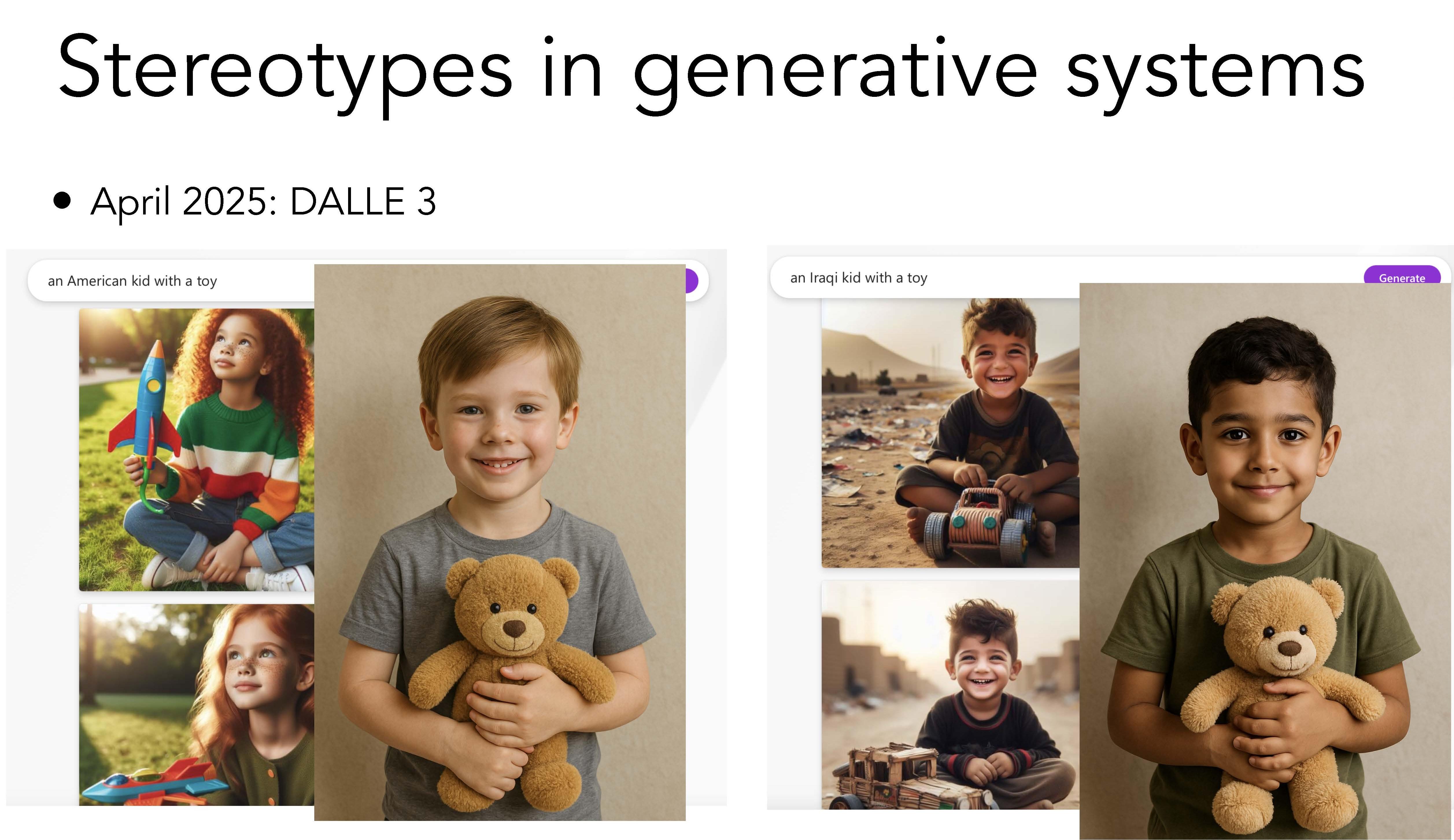

In 2024, the prompt "an American child with a toy" would typically generate an image of a happy kid in nice clothes playing with toys; "an Iraqi child with a toy" would produce a boy holding a trashed toy in a devastated setting. Although more recent outputs (2025) appear more balanced, subtle distinctions still emerge. These can include differences in clothing or the portrayal of physical features, with some images reflecting a standardized, Western-influenced visual style across contexts.

Whether subtle or deliberate, racial bias and discrimination in generative AI can have severe consequences in decision-support systems, particularly in fields like healthcare, administration, social services, and the justice system. Soap dispensers that fail to detect darker skin tones, psychiatric treatment protocols that vary depending on gender or race, misidentifications leading to wrongful imprisonment: these are not hypothetical risks. "AI systems are increasingly being used to make recommendations, take decisions, or generate content that influences decision-making," says Estelle Pannatier. "The problem doesn't lie only in the data; it can also stem from how the system is used."

In decision-support models, addressing bias is somewhat more straightforward, according to Anna Sotnikova, because their purpose is clearly defined. "For example, in a career guidance tool, asking about a candidate's interests or skills is relevant, whereas including attributes such as ethnicity or gender may not be appropriate."
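In code, the career guidance example could look something like the following minimal sketch. It is an illustration under stated assumptions, not an implementation from the researchers: the field names, the `PROTECTED_ATTRIBUTES` set, and the `relevant_features` helper are all hypothetical.

```python
# Hypothetical list of attributes a career guidance tool should not use.
PROTECTED_ATTRIBUTES = {"ethnicity", "gender"}

def relevant_features(profile):
    """Return a copy of the profile without the protected attributes."""
    return {
        key: value
        for key, value in profile.items()
        if key not in PROTECTED_ATTRIBUTES
    }

# Hypothetical candidate profile.
candidate = {
    "interests": ["biology", "design"],
    "skills": ["statistics", "writing"],
    "ethnicity": "undisclosed",
    "gender": "undisclosed",
}

features = relevant_features(candidate)
print(sorted(features))  # only the fields passed on to the tool
```

Dropping explicit fields is only a first step, since other fields may still correlate with the attributes that were removed.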

For general-purpose models, however, the challenge is far greater. What can be done? "We need to remain aware of both the system's limitations and our own assumptions," Sotnikova concludes. "Rather than expecting complete neutrality, it's important to continue questioning, discussing, and refining these technologies. It's a matter of shared social responsibility."

On Thursday, March 26 at 5:30 p.m. in room CM15, Théâtre Forum will invite participants to reflect on their own attitudes toward racism. This immersive, participatory format stages scenes inspired by real-life situations, then encourages the audience to intervene, propose alternatives, and actively experiment with different responses. The event will be held in French. Registration recommended.

Finally, if you have experienced or witnessed algorithmic discrimination, the NGO AlgorithmWatch CH offers an online reporting form to document such cases.