Large language models like ChatGPT have grown rapidly in scale, capability and adoption over the last few years. But while many people think of artificial intelligence as the technology that predicts the next word in a sentence, neuroscientists at the University of Oregon are using the same computational architecture to determine the neural circuits underlying behavior.

From guiding treatment of brain disorders to understanding what drives motivation to mapping the visual system during a hunt, UO researchers are using AI as a scientific instrument to understand the brain, the most complex organ of the human body.

For Brain Awareness Week 2026, March 16-22, OregonNews asked three labs in the UO's Institute of Neuroscience to unpack the AI lingo scientists use, including terms like machine learning and neural networks. The researchers also described the kinds of AI tools they use to disentangle the brain's 100 billion neurons and the 100 trillion connections that give rise to everything we are.

More than meets the eye

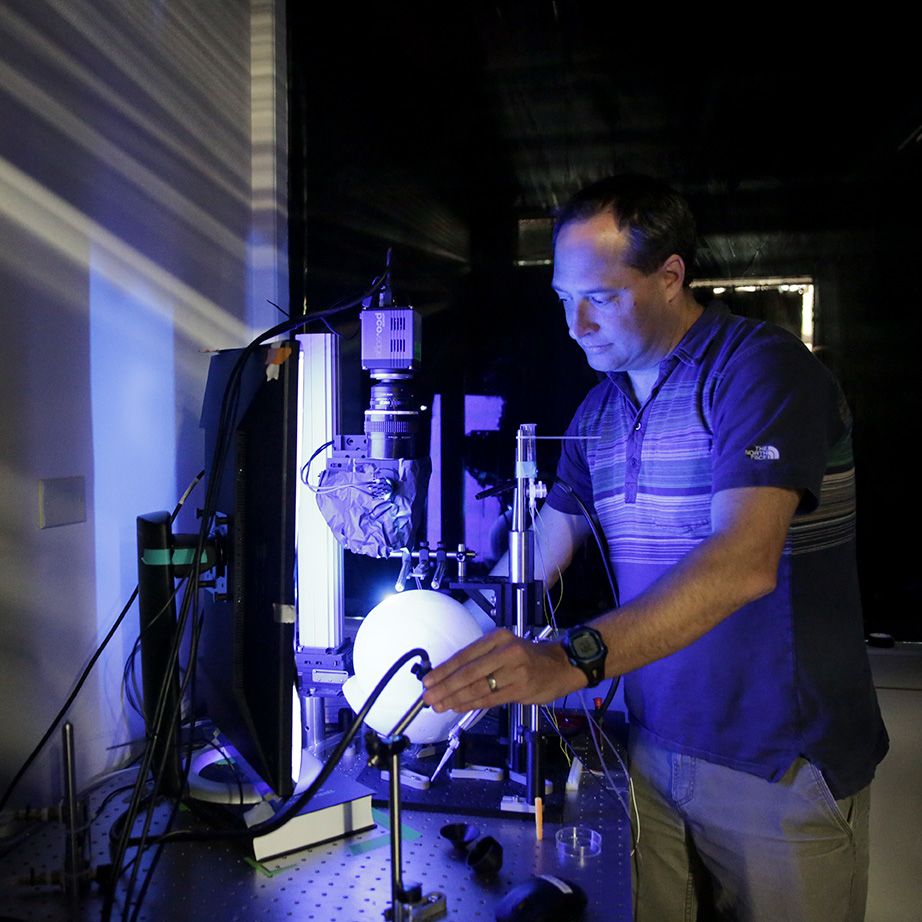

When professor Cris Niell says he studies vision, his work doesn't look like a typical optometrist appointment with rows of letters on a chart. Instead, he observes mice running on a treadmill, jumping across gaps or chasing crickets.

"My lab is trying to figure out how vision works, which really is asking how the brain works when we interact with the world," Niell said. "Like when reaching for a coffee cup or looking for a recognizable face in a crowded room. I often contrast that with the way people have studied vision in the past, which has been more like an eye exam."

Tracking dynamic behaviors produces huge volumes of video footage. To connect specific behaviors with particular brain activity, each frame needs to be annotated with the animal's position and key body parts, like its limbs, nose and pupils. Traditionally, that entailed an army of student researchers at computer screens, labeling all the points in an animal's pose across hundreds of frames, over many months.

But with AI, the process takes an afternoon, Niell said.

Many modern AI applications are built on artificial neural networks, computer systems inspired by the brain's multilayered architecture of interconnected neurons. Those networks learn patterns from data rather than following hand-coded rules, a technique known as machine learning.

Chatbots are made of neural networks that receive and generate text, but neural networks can be trained on any type of data, Niell added. His lab trains its computer models on a small number of manually labeled video frames to automatically track the animal across thousands more. Other machine-learning tools link movements with neural activity recorded from electrodes.
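The idea of labeling a few frames by hand and letting a model label the rest can be sketched in a few lines of Python. This is a toy illustration, not the lab's pipeline: the "frames" are synthetic one-dimensional strips of pixels with a bright blob at the nose, and a simple nearest-neighbor lookup stands in for the deep neural networks used in practice. The names `make_frame` and `predict` are hypothetical.

```python
import math
import random

WIDTH = 20  # pixels in each synthetic one-dimensional "frame" (assumed toy size)


def make_frame(nose_pos, rng, noise=0.02):
    """Render a bright blob centred on nose_pos, plus a little pixel noise."""
    return [
        math.exp(-((i - nose_pos) ** 2) / (2 * 1.5 ** 2)) + rng.gauss(0, noise)
        for i in range(WIDTH)
    ]


def predict(frame, labeled):
    """Return the label of the hand-labeled frame that looks most similar."""
    def dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    _, label = min(labeled, key=lambda item: dist(frame, item[0]))
    return label


rng = random.Random(0)

# A small hand-labeled set: 16 frames with known nose positions.
labeled = [(make_frame(float(p), rng), float(p)) for p in range(2, 18)]

# A much larger unlabeled set; true positions are kept only to score the result.
true_positions = [rng.uniform(2, 17) for _ in range(1000)]
frames = [make_frame(p, rng) for p in true_positions]

# Label every new frame automatically from the 16 examples.
predictions = [predict(f, labeled) for f in frames]
mean_error = sum(abs(p - t) for p, t in zip(predictions, true_positions)) / len(frames)
print(f"mean tracking error: {mean_error:.2f} pixels")
```

Sixteen labeled examples are enough here to place the nose in a thousand new frames to within about a pixel. Real video is far messier, which is why labs rely on trained neural networks rather than a lookup like this one.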

In one AI-assisted study, the team found that mice judged distance across large gaps of four to eight inches by bobbing their head up and down before jumping.

"People tend to think of vision as 'what are you seeing?'" Niell said. "But it turns out the brain's visual cortex also cares a lot about how the head is tilted, where the eyes are pointed and so on. And it was machine-learning methods that allowed us to pull that data out."

Niell is now one of 20 principal investigators in the Simons Collaboration on Ecological Neuroscience, a 10-year international initiative that brings together neuroscientists and machine-learning experts to understand how the brain links perception with action in complex environments.

"There's this huge, unexplored area of how the brain works," Niell said. "The whole goal is to take advantage of the fact that now we can study animals and humans in much richer contexts, partly enabled by AI approaches."

"The whole goal is to take advantage of the fact that now we can study animals and humans in much richer contexts, partly enabled by AI approaches."

Cris Niell

Professor of Biology and Neuroscience

A digital brain to test therapies

Artificial neural networks were originally designed to imitate the brain, replicating the architecture behind natural intelligence to create thinking machines that solve complex problems. That resemblance also means those constructs are the closest computational replicas of the brain, making them handy testing grounds for neuroscience questions.

Adapting principles of the brain to build smarter AI and then, in turn, using AI to study the brain is the premise of NeuroAI, a field that has gained traction over the last few years. That two-way exchange sits at the heart of the work of associate professor Luca Mazzucato.

"We are using AI to study AI," Mazzucato said.

Mazzucato came to this work by way of his training in theoretical physics. While studying quantum gravity, he relied on a mathematical model that physicists had long used to understand complex systems with many interacting parts. He later encountered the same model in neuroscience, where researchers borrowed it to construct simple networks that could learn and recall memories. Those applications have since contributed to the development of modern artificial neural networks.
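The article does not name the model. The sketch below assumes it resembles a Hopfield network, a well-known memory model borrowed from the physics of interacting spins, and shows how such a network can store patterns and recall one from a corrupted cue. The function names and the two 16-unit patterns are illustrative choices, not anything taken from Mazzucato's work.

```python
def train(patterns):
    """Hebbian rule: strengthen links between units that take the same value."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    weights[i][j] += p[i] * p[j] / n
    return weights


def recall(weights, cue, sweeps=5):
    """Update units one at a time until the state settles on a stored memory."""
    state = list(cue)
    for _ in range(sweeps):
        for i in range(len(state)):
            field = sum(w * s for w, s in zip(weights[i], state))
            state[i] = 1 if field >= 0 else -1
    return state


# Two stored "memories", each a pattern of +1/-1 values across 16 units.
memory_a = [1] * 8 + [-1] * 8
memory_b = [1, -1] * 8
weights = train([memory_a, memory_b])

# Corrupt the first memory by flipping two units, then let the network recall.
cue = list(memory_a)
cue[0], cue[1] = -cue[0], -cue[1]
recalled = recall(weights, cue)
print("recovered original memory:", recalled == memory_a)
```

The network never looks the answer up in a list. Each unit simply responds to its neighbors, and the stored pattern emerges from those interactions, which is the kind of collective behavior physicists study in systems with many interacting parts.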

"I was completely blown away and was like, 'Okay, enough with quantum gravity!'" Mazzucato said. "But there's some deeper truth that the same insights in physics can be transferred and applied to the brain. Many people in my lab are former physicists."

Mazzucato uses AI to simulate the brain so he can test and optimize brain-computer interfaces. Those devices are implantable chips that record and stimulate neural activity to help compensate for lost motor or sensory control in patients with spinal cord injuries, amputations and neurological disorders like Parkinson's disease.

Current studies of brain-computer interfaces often rely on trial and error in hospital settings, Mazzucato said. He wants to create mathematical models that help bypass that costly, resource-intensive process and guide more personalized designs before stimulating a living brain.

"Our goal is to test and validate all of these ideas on AI," he said, "so that we refine our approach and have targeted interventions from the start."

"There's some deeper truth that the same insights in physics can be transferred and applied to the brain."

Luca Mazzucato

Associate Professor of Biology and Mathematics

Finding the next reward

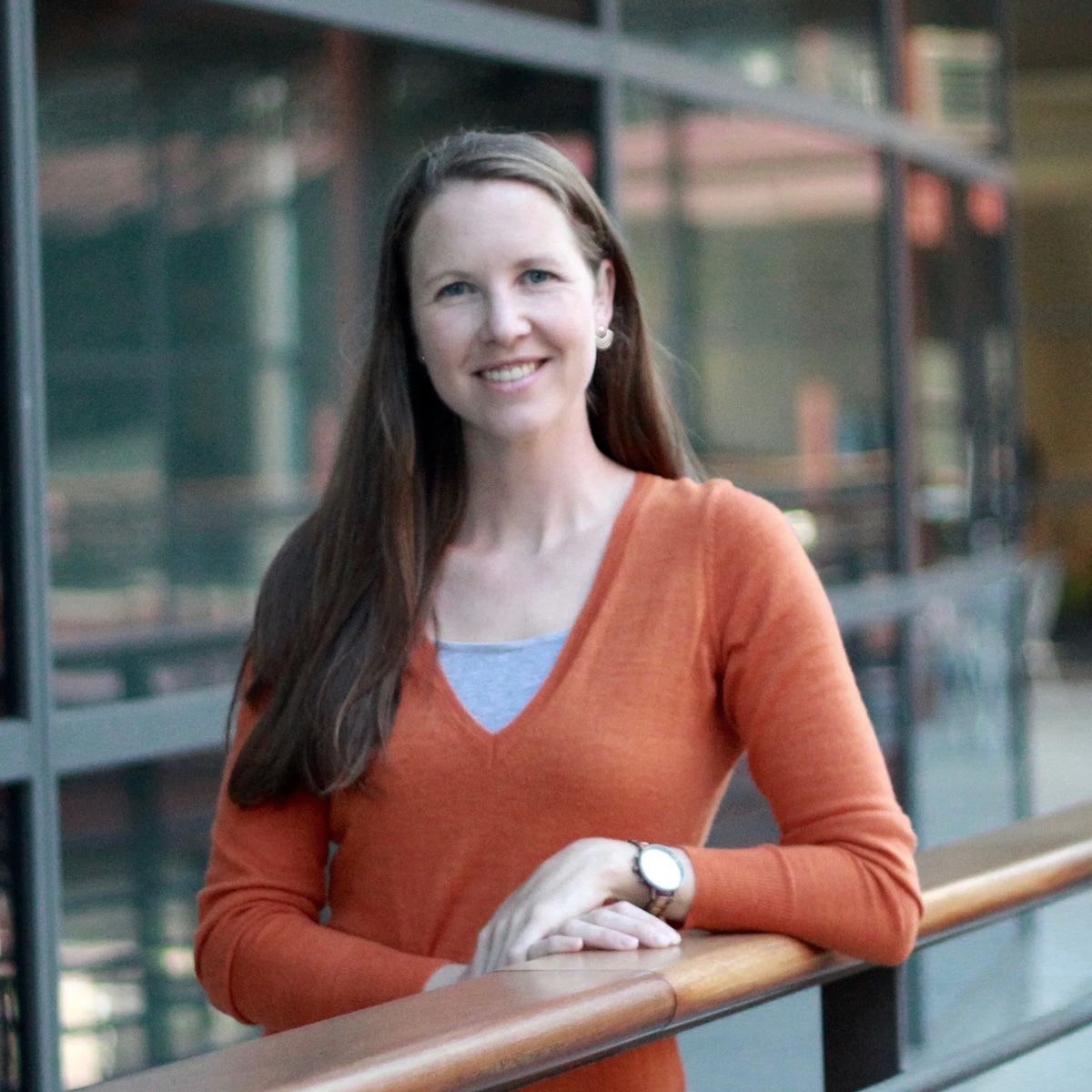

From cracking open a cold one at the end of a long day to getting praise from a colleague, reward drives much of what we do. But the same system that motivates behavior can be pushed into overdrive by addictive drugs or dampened in disorders like depression. Such characteristics of the reward system are what assistant professor Emily Sylwestrak studies.

"My lab thinks a lot about how we set expectations and evaluate outcomes: 'Does this piece of cake measure up to the waiter's hype? Does a movie live up to its trailer?'" Sylwestrak said. "After seeing how strongly the brain responds to disappointment, setting expectations appropriately has become a sort of occupational hazard."

In her research, Sylwestrak investigates which neurons are active during reward-seeking behaviors, like eating, drinking and socializing, and how they work together to evaluate outcomes. Similar to Niell's process, she uses AI to automatically track and label the behaviors and facial expressions of mice, syncing those with recorded brain activity.

"It's important to know which brain cell types to target," Sylwestrak said, "because if you're going to develop a drug to help with neuropsychiatric disorders, you need to know which knobs to turn."

For years, neuroscience experiments in this area were limited to tightly controlled conditions, such as pressing a lever for a reward. That's because analyzing the full range of unconstrained behaviors required data sets too large for manual analysis.

AI allows researchers to measure that complexity instead of filtering it out.

"What I think is so exciting about these tools is that variability is now a feature rather than a bug or limitation," Sylwestrak said.

Although AI can identify interesting behavioral motifs, Sylwestrak emphasized that scientific intuition remains essential.

"AI can't completely replace a curious and excited researcher," she said. "It supercharges the process, but there must be human dialogue with machine-learning-based outputs. I don't see a researcher's own curiosity and the power of observation as obsolete."

And when it comes to AI apps like chatbots that generate content, Sylwestrak reminds her students and next-generation scientists that those tools are designed to find patterns in existing texts and predict the words most likely to come next.
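A stripped-down version of that idea fits in a few lines of Python. The sketch below is an assumption-laden toy: it counts which word follows which in a made-up, three-sentence corpus and returns the most frequent follower, whereas real chatbots use large neural networks trained on vastly more text. The corpus and the `most_likely_next` helper are hypothetical.

```python
from collections import Counter, defaultdict

# A tiny made-up corpus standing in for the text a chatbot learns from.
corpus = "the mouse sees the cricket . the mouse chases the cricket . the mouse jumps ."
words = corpus.split()

# Count how often each word is followed by each other word.
follow = defaultdict(Counter)
for current, nxt in zip(words, words[1:]):
    follow[current][nxt] += 1


def most_likely_next(word):
    """Return the word seen most often after `word`, or None if it is unseen."""
    if word not in follow:
        return None
    return follow[word].most_common(1)[0][0]


print(most_likely_next("the"))     # "mouse" follows "the" 3 times, "cricket" 2
print(most_likely_next("chases"))  # only ever followed by "the"
```

The predictor can only echo what it has already seen: after "the", it will always say "mouse", because that pairing is the most common in its training text.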

"In science, we don't want to do the most likely next experiment. We want to do the most interesting or the most fruitful or the most creative next experiment," she said. "If you have AI do everything, it's going to be derivative. Not transformative."

"In science, we don't want to do the most likely next experiment. We want to do the most interesting or the most fruitful or the most creative next experiment."

Emily Sylwestrak

Assistant Professor of Biology and Neuroscience