Do you know what the Apple logo looks like?

Chances are, you think you do. It's ubiquitous and iconic. How could you not know it?

But when tested, it turns out very few people can remember all the features of the logo. One study of 85 people found that only about half could pick the correct logo out of a lineup of similar ones. And only one person could correctly draw it.

This isn't an isolated example. A classic study from 1979 found that people similarly couldn't draw a penny accurately or pick out a correctly drawn penny from incorrect ones.

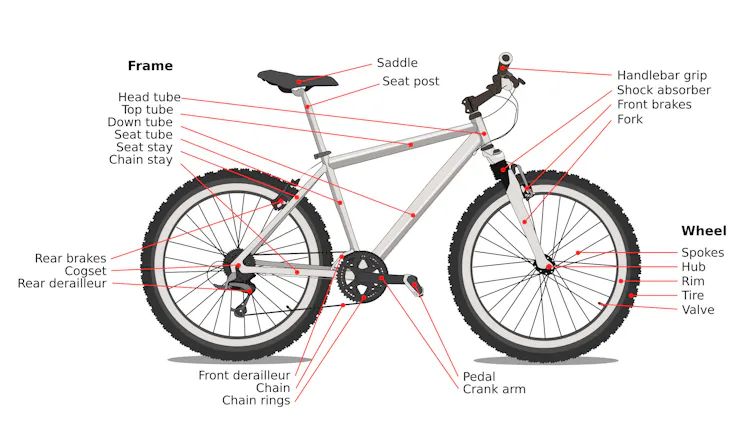

People are bad not just at remembering things they see all the time, but also at knowing how those things work. In a 2006 study, many people made significant errors when drawing a bicycle, like putting the chain around the front wheel as well as the back wheel. More than just a forgotten detail, putting the chain around both wheels shows a deeper misunderstanding of how a bicycle works. A bicycle with a chain around both wheels couldn't be steered, because the front wheel wouldn't be able to turn.

It turns out people's knowledge of how the world works is often fragmented and sketchy at best. They systematically overestimate their understanding of everyday devices and natural phenomena. People tend to give themselves high ratings on how well they understand something, such as how bicycles or zippers work. But when they're asked to actually explain the mechanics of these objects, their ratings of their own understanding typically drop.

Just as your knowledge of the world around you is imperfect, your knowledge about your own knowledge, also called metaknowledge, is often flawed. My field of cognitive science has been uncovering various gaps in human metaknowledge for decades.

If people are systematically overconfident about how well they understand things, why don't they notice when they don't understand something? And what can people do to better recognize the limits of their own knowledge?

Why you think you know more than you do

Researchers have identified several factors behind people's overconfidence in their knowledge.

One is that people confuse environmental support with understanding: The information is out in the world but not actually in your head. With a bicycle or a zipper, all of the parts are visible to you, and you may confuse this transparency for an internal understanding of how they work. But until you go to use that knowledge by attempting to explain how they work, you may not recognize that you don't understand how those parts interact.

A second factor is confusing different levels of analysis. People can often describe how something works at a very high level. You know that the engine of a car makes the car go, and the brakes slow and stop the vehicle. But confidence in your high-level understanding of the car may bias you to think you also have a good grasp of the finer details, like how the engine pistons and brake pads work.

Additionally, people can be blind to the ways their knowledge shapes their own perception. In one study, researchers had participants tap out the tune to a popular song. On average, the tappers thought listeners would be able to identify the song about 50% of the time. But when listeners had to identify the tapped song, they could actually identify it only 2.5% of the time. The tappers didn't realize how much their own knowledge of the song made identifying it seem easy.

This disconnect has consequences beyond whether someone else can understand your Morse code version of a song. When teaching people, whether in formal classroom settings or through casual mentorship, you can sometimes have an expert blind spot: the inability to recognize the difficulties beginners face when learning something you have expertise in.

Building expertise often involves internalizing knowledge to the point where it becomes invisible to you. You draw on knowledge you don't realize you have, making it hard to relate to learners who lack this knowledge - and, of course, hard for learners to relate to your teaching. You might have experienced this when you've gotten partway through explaining something, only to realize you've been using jargon you forgot isn't common knowledge and lost your listener.

How to address metaknowledge failures

Your metaknowledge can fail in two directions: You can think you know more than you do, and you can be blind to how much you're relying on knowledge you do have. Each calls for a different response to correct it.

When you're overconfident in your knowledge, the remedy is using that knowledge. You'll quickly realize how much you actually understand and dial down your confidence. Challenging yourself to actually try to walk through how something works is a great exercise in intellectual humility - that is, recognizing that you may be wrong - and can keep you from getting out over your skis.

Building a greater appreciation for what you know is more difficult. You can't simply unlearn what you've internalized. But what this challenge shows is that, to some extent, knowing a subject and knowing how to teach it are two separate skills. Some experts are great teachers, but not simply by virtue of being experts. Recognizing that you have to approach teaching with humility, and that your expertise doesn't automatically make you a skilled teacher, can go a long way toward making you a better teacher and mentor.

Neither of these is a quick or easy fix for failures of metaknowledge. Both require ongoing intellectual humility and a willingness to distrust your own confidence. But acknowledging the fallibility of your own metaknowledge is a good place to start.


Thomas Blanchard does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.